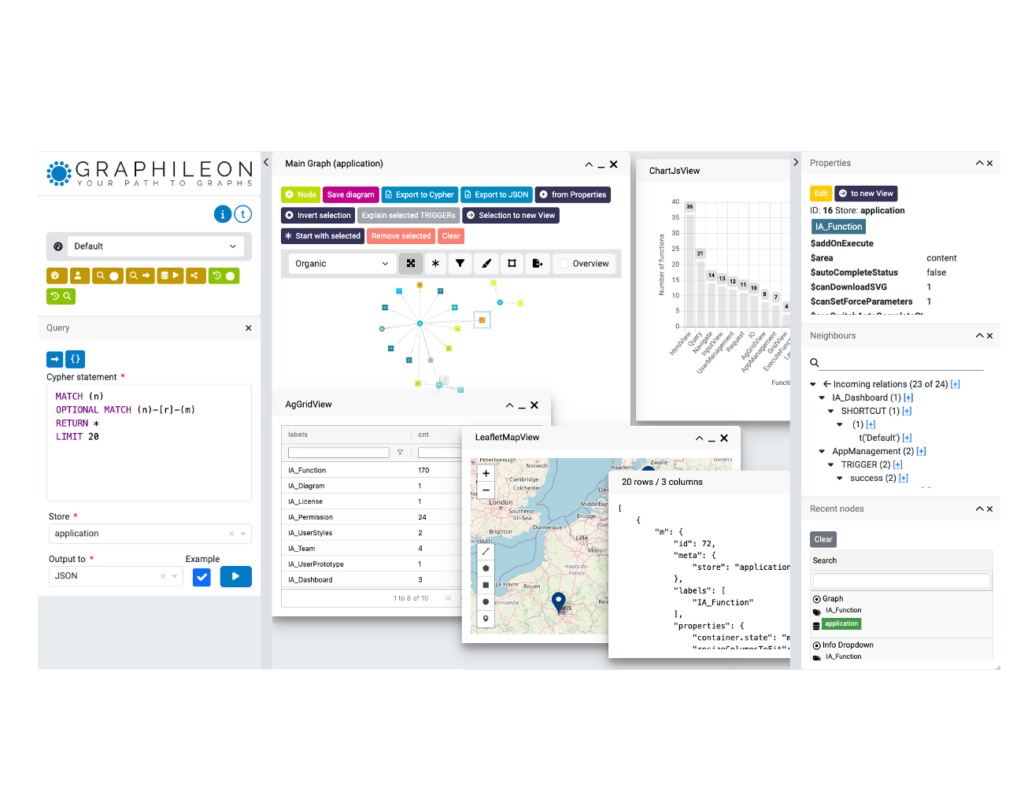

At Graphileon, we store applications as graphs: networks of nodes and edges that represent the flow and structure of entire systems. To execute these application flows correctly, our routing and interpretation logic depends heavily on understanding trigger property keys and their meanings. Accuracy here isn’t optional; it’s foundational to everything we do.

Initially, Ariadne, our LLM-based guide, used a Large Language Model to characterize and categorize trigger property keys. It seemed like a natural fit: an AI system analyzing patterns in data. But as we scaled and refined our platform, we realized this approach had fundamental limitations and didn’t align with our core values.

The Problem with Reasoning About Core Logic

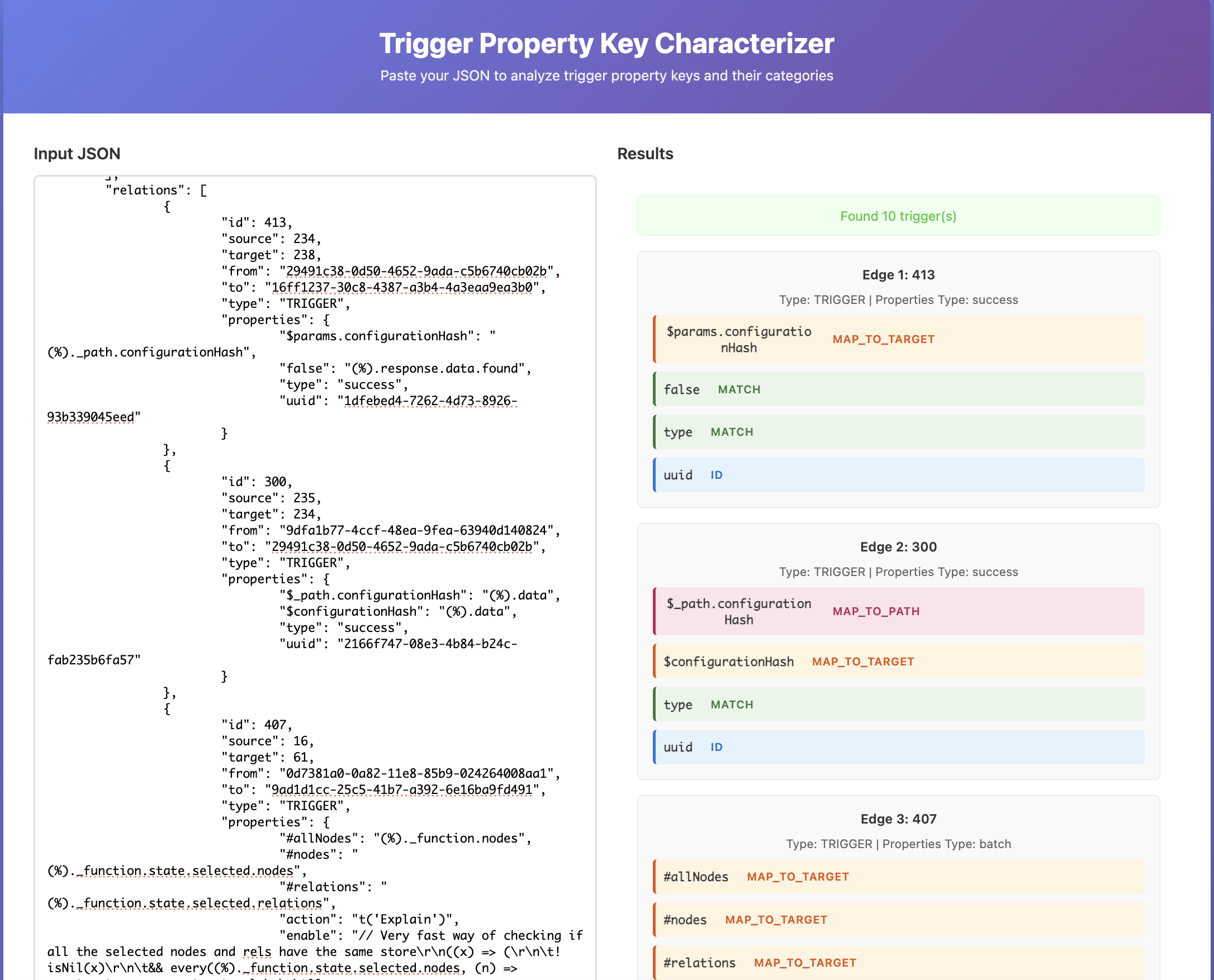

LLMs excel at tasks requiring flexibility and nuance. But trigger property key characterization isn’t about nuance; it’s about precision. Our execution engine needs to interpret the same keys in the same way, every single time, without ambiguity or hallucination. When the core logic of your product depends on consistent interpretation, “good enough” isn’t actually good enough.

We discovered that while our LLM approach worked most of the time, edge cases and inconsistencies occasionally emerged. More importantly, we were asking a neural network to “reason” about something that could be solved deterministically.

But there’s another critical dimension: our customers. When users request explanations of their configurations through Ariadne, they need to trust that what we’re telling them is accurate. If our system mischaracterizes a trigger property key, Ariadne generates explanations built on that faulty foundation, and customers end up misunderstanding their own applications. Accuracy isn’t just a technical requirement; it’s essential to customer understanding and trust. We realized our LLM characterization wasn’t consistently meeting that standard.

The Symbolic Shift

We pivoted to a symbolic approach using our internal logic system. Instead of inference, we now use explicit rule-based analysis to characterize trigger properties. The results speak for themselves:

- 100% accuracy across all trigger characterizations

- Faster execution with no inference overhead

- Lower energy consumption compared to LLM inference

- Reduced operational costs by eliminating model queries

The symbolic system returns perfect results because it follows deterministic rules. There’s no probability and no guesswork, just reliable pattern matching and classification: the same key always yields the same characterization.
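To make the idea of deterministic, rule-based characterization concrete, here is a minimal Python sketch. It is not our internal logic system: the key prefixes, category names, and the `characterize` function are all hypothetical, chosen only to illustrate how an ordered rule table can classify a key and carry a human-readable explanation with it.

```python
import re
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Rule:
    category: str        # hypothetical category name
    pattern: re.Pattern  # hypothetical key pattern
    explanation: str     # text an assistant could reuse verbatim


# Hypothetical rules, evaluated in order; the first match wins.
# None of these prefixes are taken from the actual Graphileon rule set.
RULES: Tuple[Rule, ...] = (
    Rule("meta", re.compile(r"^_"), "Internal bookkeeping key."),
    Rule("target_parameter", re.compile(r"^\$"), "Passes a value to the target."),
    Rule("condition", re.compile(r"^#"), "Restricts when the trigger fires."),
)


def characterize(key: str) -> Tuple[str, str]:
    """Return (category, explanation) for a trigger property key.

    The function is pure: the same key always gives the same answer.
    """
    for rule in RULES:
        if rule.pattern.search(key):
            return rule.category, rule.explanation
    # An explicit fallback makes gaps in the rule set visible
    # instead of guessing a category.
    return "unclassified", "No rule matched this key."


if __name__ == "__main__":
    for key in ("$data", "#event", "_id", "label"):
        print(key, "->", characterize(key))
```

Because every outcome traces back to one explicit rule, a table like this can be covered exhaustively by unit tests, and any explanation shown to a user can point to the exact rule that produced it.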

Two Lessons Worth Sharing

First: Always check if you can solve it symbolically. Not every problem needs machine learning. Before reaching for sophisticated neural approaches, ask whether the problem can be solved with explicit rules, logic, and symbolic reasoning. If the answer is yes, the results will often be superior.

Second: Never compromise on core logic. Your product’s fundamental mechanisms demand certainty. If something is critical to how your system operates, it should be based on rules you can verify, explain, and guarantee. This is where a symbolic approach earns its place, even if it seems less “cutting-edge” than alternatives.

We’re proud of this shift. It’s a reminder that the most elegant engineering solutions aren’t always the most complex ones. Sometimes they’re the most direct.
